Rishi Veerapaneni

PhD student in the Robotics Institute in the School of Computer Science at CMU

Robotics PhD Student

Carnegie Mellon University

Hello! I am a PhD student in the School of Computer Science at Carnegie Mellon University. I work with Professors Maxim Likhachev and Jiaoyang Li and am supported by the NSF Graduate Research Fellowship. Previously, I double majored in EECS and Applied Math at UC Berkeley, where I conducted research with Professor Sergey Levine in Berkeley AI Research and was very active in teaching (EE16A, CS188, CS170 x2).

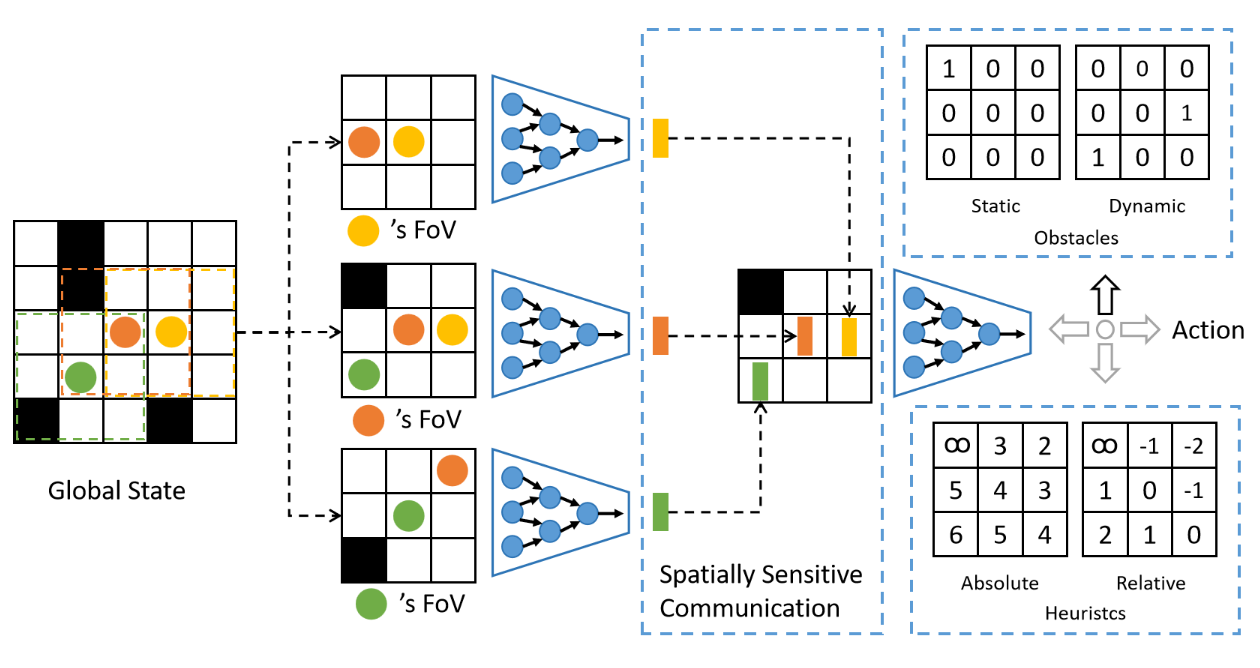

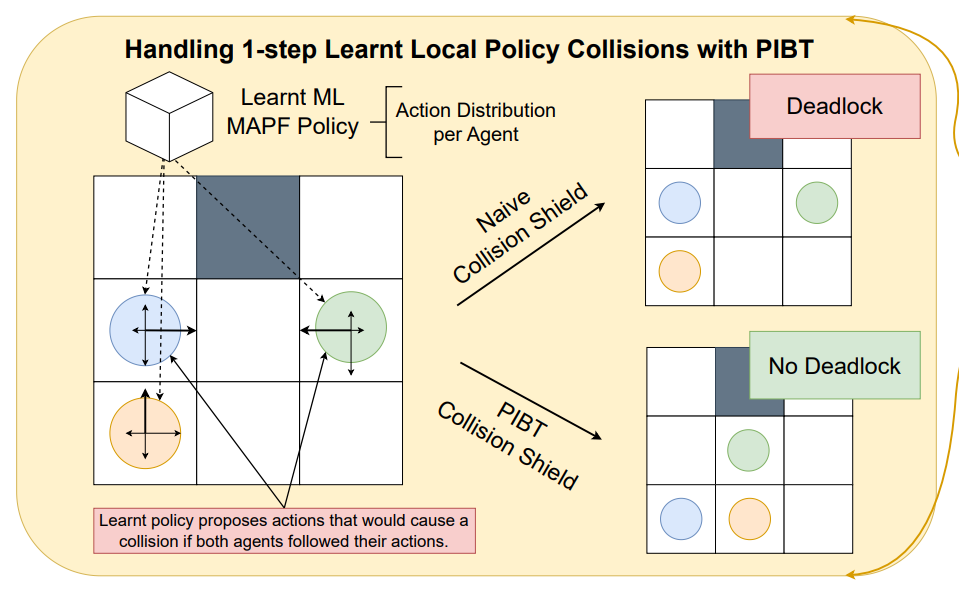

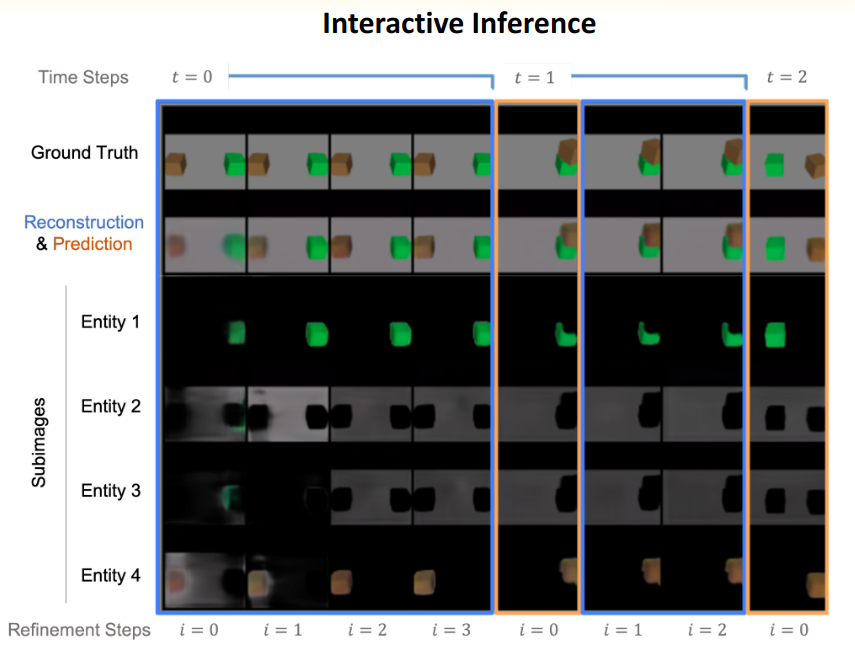

My research develops scalable methods for long-horizon decision making at the intersection of reinforcement learning, imitation learning, and search-based planning. My solutions combine learning with structured planning and leverage test-time compute. While much of my work is motivated by large-scale multi-robot coordination problems, my broader goal is building learning-and-reasoning architectures for complex decision making. My work on scalable imitation learning combined with search received the Best Multi-Agent Systems Paper and Best Student Paper awards at ICRA 2025. Looking ahead, I am particularly interested in working on LLMs, robot learning, or foundation models.